self.portrait

This work explores what portraiture becomes when a model can capture not just a single likeness, but the broader latent space of a person.

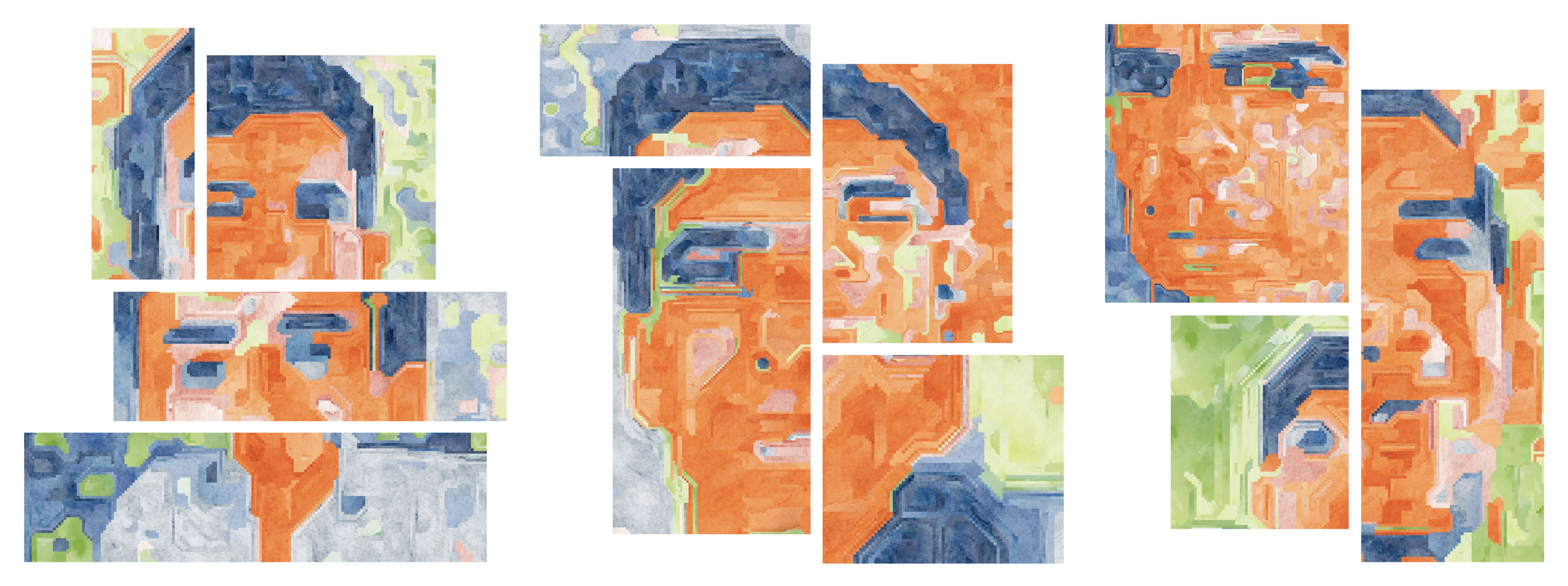

I trained a LoRA on images of myself from the last ten years, then used it within CollageNet, a custom SDXL-based collage pipeline I created. The system assembles the portrait by matching regions of the evolving latent against a set of reference images, then composing the final image from many visual fragments of those references. The result is both a self-portrait and a study of how AI reframes identity as something navigable, distributed, and generative.

CollageNet

CollageNet works by intervening during SDXL denoising. At each step, it reads the current latent, segments it into superpixel-like regions, samples candidate windows from a reference latent bank, scores those candidates with masked cosine similarity, and blends the best match back into the latent before continuing diffusion. Once generation is complete, the final assignment map is used to render a sharp collage from the original source images. That reference latent bank is built at runtime from whatever source images the user provides, so the same pipeline can collage essentially any imagery rather than relying on a fixed dataset.
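To make the scoring and blending step above more concrete, here is a minimal NumPy sketch of one region update. It is an illustration under stated assumptions, not the CollageNet code: the function names (`masked_cosine`, `collage_step`), the `blend` and `num_candidates` parameters, the uniform random window sampling, and the choice to sample windows the same size as the full latent are all hypothetical simplifications. Segmentation and the SDXL denoising loop are left out.

```python
import numpy as np

def masked_cosine(latent, candidate, mask, eps=1e-8):
    """Cosine similarity between two (C, H, W) arrays, using only the
    spatial positions where the boolean (H, W) mask is True."""
    a = latent[:, mask].ravel()
    b = candidate[:, mask].ravel()
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b) + eps))

def collage_step(latent, region_mask, bank, num_candidates=16, blend=0.5, rng=None):
    """Update one region of the latent from the best-scoring reference window.

    latent:      (C, H, W) current latent at this denoising step
    region_mask: (H, W) boolean mask for one superpixel-like region
    bank:        list of (C, H_ref, W_ref) reference latents, each at
                 least as large as the current latent (assumption)
    Returns the blended latent, the chosen (bank index, top, left), and
    the winning score.
    """
    if rng is None:
        rng = np.random.default_rng()
    _, h, w = latent.shape
    best_score, best_window, best_src = -np.inf, None, None

    for _ in range(num_candidates):
        # Sample a random reference and a random window inside it.
        idx = int(rng.integers(len(bank)))
        ref = bank[idx]
        top = int(rng.integers(ref.shape[1] - h + 1))
        left = int(rng.integers(ref.shape[2] - w + 1))
        window = ref[:, top:top + h, left:left + w]

        score = masked_cosine(latent, window, region_mask)
        if score > best_score:
            best_score, best_window, best_src = score, window, (idx, top, left)

    # Blend the winning window into the latent, inside the region only.
    out = latent.copy()
    out[:, region_mask] = (
        (1.0 - blend) * latent[:, region_mask]
        + blend * best_window[:, region_mask]
    )
    return out, best_src, best_score
```

In a sketch like this, the `(bank index, top, left)` tuple recorded for each region plays the role of the assignment map: after generation, it identifies which part of which source image to paste at full resolution.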

Renders

Small changes to the parameters or the source collage images can produce dramatically different results.

[Images: five renders]